How to Analyze ROS Bag Files and Build a Dataset for Machine Learning

How you work with real-world robot data depends on how ROS (Robot Operating System) messages are stored. In the article 3 Ways to Store ROS Topics, we explored several approaches, including storing compressed Rosbag files in time-series storage and storing topics as separate records.

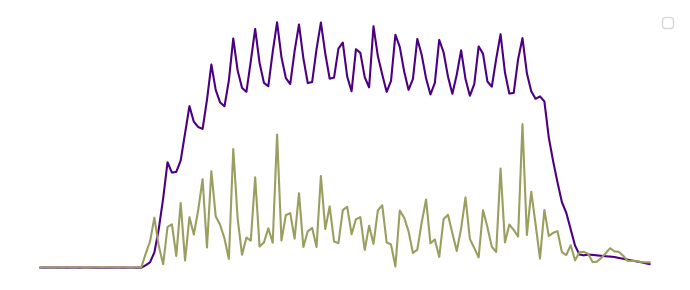

In this tutorial, we'll focus on the most common format: .bag files recorded with Rosbag. These files contain valuable data on how a robot interacts with the world — such as odometry, camera frames, LiDAR, or IMU readings — and provide the foundation for analyzing the robot's behavior.

You’ll learn how to:

- Extract motion data from .bag files

- Create basic velocity features

- Train a classification model to recognize different types of robot movements

We'll use the bagpy library to process .bag files and apply basic machine learning techniques for classification.
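As a rough preview of the extraction step, the sketch below uses bagpy's `bagreader` to export one topic to CSV and then reads selected columns with the standard library. The helper names `export_topic_to_csv` and `load_numeric_columns`, the file name, and the `/odom` topic are illustrative assumptions, and the column names are assumed to follow bagpy's flattened field naming, so check the header of your own CSV before relying on them.

```python
import csv


def export_topic_to_csv(bag_path, topic):
    """Use bagpy to dump one topic to CSV and return the CSV path."""
    # Imported lazily so the CSV helper below also works without bagpy installed.
    from bagpy import bagreader

    reader = bagreader(bag_path)
    print(reader.topic_table)  # overview of the topics stored in the bag
    return reader.message_by_topic(topic)


def load_numeric_columns(csv_path, columns):
    """Read the selected CSV columns as lists of floats (hypothetical helper)."""
    data = {name: [] for name in columns}
    with open(csv_path, newline="") as handle:
        for row in csv.DictReader(handle):
            for name in columns:
                data[name].append(float(row[name]))
    return data


if __name__ == "__main__":
    # Hypothetical file and topic names; replace them with your own.
    csv_path = export_topic_to_csv("spot_forward.bag", "/odom")
    # Column names are assumed; inspect the CSV header to confirm them.
    odom = load_numeric_columns(csv_path, ["Time", "twist.twist.linear.x"])
    print(len(odom["Time"]), "odometry samples loaded")
```

Keeping the bagpy call in its own function means the CSV-reading part can be reused or tested on any exported file, even on a machine without ROS tooling.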

Although the examples in this tutorial use data from a Boston Dynamics Spot robot (moving forward, moving sideways, and rotating), you can adapt the code to your own recordings.
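To make the feature and classification steps more concrete, here is a minimal, dependency-free sketch. It assumes each recording has already been reduced to `(t, x, y, yaw)` pose samples, computes mean absolute forward, lateral, and angular speeds by finite differences, and classifies with a simple nearest-centroid rule. That rule is a stand-in chosen for brevity, not necessarily the model used later in this tutorial, and all function names are hypothetical.

```python
import math


def velocity_features(samples):
    """Return mean absolute (forward, lateral, angular) speed from (t, x, y, yaw) samples."""
    vx_body, vy_body, wz = [], [], []
    for (t0, x0, y0, yaw0), (t1, x1, y1, yaw1) in zip(samples, samples[1:]):
        dt = t1 - t0
        if dt <= 0:
            continue  # skip duplicate or out-of-order timestamps
        dx, dy = (x1 - x0) / dt, (y1 - y0) / dt
        # Rotate the world-frame velocity into the robot's body frame.
        cos_y, sin_y = math.cos(yaw0), math.sin(yaw0)
        vx_body.append(cos_y * dx + sin_y * dy)
        vy_body.append(-sin_y * dx + cos_y * dy)
        # Wrap the yaw difference into [-pi, pi) before dividing by dt.
        dyaw = (yaw1 - yaw0 + math.pi) % (2 * math.pi) - math.pi
        wz.append(dyaw / dt)
    n = len(vx_body)
    if n == 0:
        raise ValueError("need at least two samples with increasing timestamps")
    return (
        sum(abs(v) for v in vx_body) / n,
        sum(abs(v) for v in vy_body) / n,
        sum(abs(v) for v in wz) / n,
    )


def fit_centroids(features, labels):
    """Average the feature vectors of each label (nearest-centroid 'training')."""
    sums, counts = {}, {}
    for vec, label in zip(features, labels):
        acc = sums.setdefault(label, [0.0] * len(vec))
        for i, value in enumerate(vec):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: tuple(s / counts[label] for s in acc) for label, acc in sums.items()}


def predict(centroids, vec):
    """Return the label whose centroid is closest to vec (Euclidean distance)."""
    return min(centroids, key=lambda label: math.dist(centroids[label], vec))
```

Note that linear speeds (m/s) and angular speed (rad/s) live on different scales, so with real data you would likely want to normalize the features before comparing distances.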